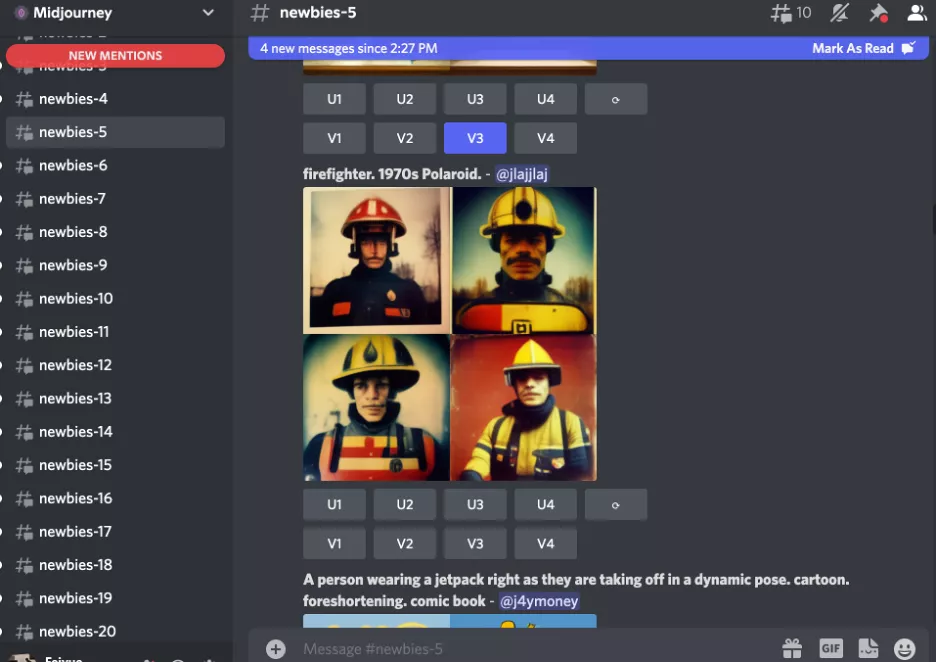

"A lemon lounging on the beach with sunglasses" - Artificial Intelligence please create

Let's talk about the recent craze for art created by artificial intelligence (AI). At the end of May and into June, while the International Forum on Art and Artificial Intelligence at Ai Factory was taking place, a closely related and important event was also under way: two major AI image generators, DALL-E 2 and Midjourney, both began sending out beta invitations. Members of Dune were invited by Midjourney to join its beta Discord community, where we could watch countless images being generated, filtered and tuned, and try entering our own prompts to generate AI images.

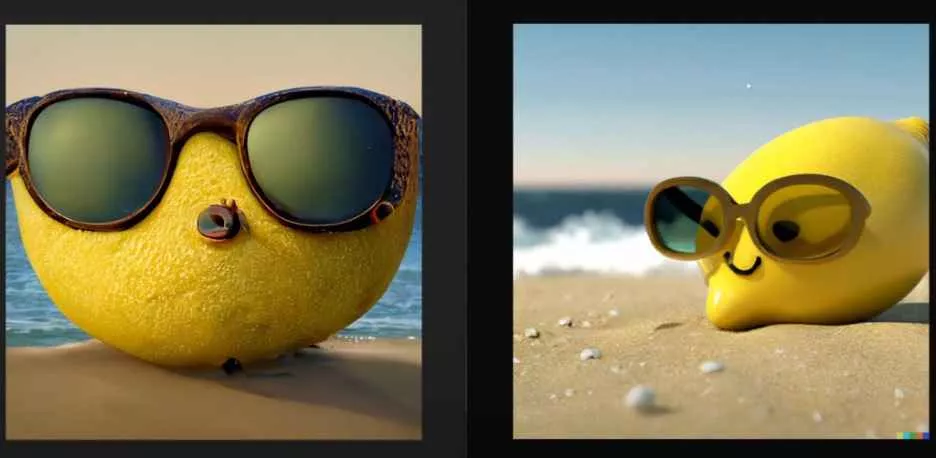

We expected the AI image generation interface to be a simple prompt box with a results page, much like Google image search except that 'search results' are replaced by 'generated results'. In reality, every invited Midjourney tester joins a Discord community, and that larger community is further subdivided into fifty 'newcomer' groups. When a newcomer joins, Midjourney's bot automatically posts a message in the announcement channel assigning them to newcomer group number XX.

In this 'group chat' mechanic, the user enters a prompt in the required format - for example, "a lemon wearing sunglasses, lounging on the beach, photorealistic style" - and about a minute later the bot replies in the group chat with four AI images generated from the prompt, mentioning (@) the newcomer in a new message. Notably, this means that everything a user requests, both the prompts entered and the images generated, is visible to everyone.

Screenshot of Midjourney's Discord community. On the left are the channels for the different newcomer groups. The prompt for the image shown on the right reads 'Fireman, 1970s Polaroid style'. The U1 button below the image requests an upscale of the first image, V1 requests further variations of the first image, and so on. Image credit: Author.

From here, the user can choose among the four images, asking the bot to produce further variations of one or more of them, or to upscale one by enlarging it and increasing its resolution. Interestingly, because all these steps happen in a group chat interface, any user can pick from the images requested by other users, and the bot responds to each of these requests by posting the result in the group chat.

We were very interested in the form of interaction and organization chosen by the Midjourney team. I have to admit that the sight of fifty newcomer groups with messages scrolling past one after another was overwhelming: the sheer volume of information, and the ever-increasing rate at which it accumulated, was bound to be too much for any single human brain to keep up with, and the mechanism was a little disorienting for newcomers at first. But as we adapted, we came to appreciate the beauty of the format. It was as if we were in the middle of a huge experimental public art project, something that a single-point, individual-user-centric interface (like Google Images' search box) cannot match.

Same prompt: "A lemon wearing sunglasses, lounging on the beach, photorealistic style." On the left is the image generated by Midjourney, on the right the one from DALL-E 2. Image credit: MattVideoProductions.

First, this constant stream of images, rolling in like a flood or a snowball, is perhaps one of the key characteristics that AI art is trying to convey to us: no human artist, or team of human artists, can respond to a 'client's' requests in such volume and at such speed, producing ever more variants to be further modified and enlarged, twenty-four hours a day, endlessly.

Secondly, the mechanics of this group chat put prompt writers, viewers and AI bots on an equal footing for the first time, with blurred boundaries between them. There is no author-viewer dichotomy here, and attribution seems to have nowhere to land: whose work is a stunning image, really? Is it the person who typed the initial prompt? Is it the AI bot? Is it an algorithm engineer on the Midjourney team? Is it another user who helped choose a variant midway through or asked for a larger size? It is a collaborative, decentralized process involving multiple parties.

Third, each user constantly sees other users' prompts and newly generated images, which turns the space into a workshop-style venue for learning from others how to write better and more creative prompts. At the same time, watching images requested by others appear and sifting through them is essentially volunteering to help the Midjourney team train its algorithms. All of this raises a question unheard of in the days of human artists: who really benefits from the reciprocal exchange of AI creation? Among the architects, the prompt writers, the screeners, the audience and the machines, who is really training whom, and who is learning from whom?

Prompt: "A Japanese woman sitting on a tatami mat, photorealistic style." Image generated by Midjourney. Image credit: Author.

In fact, these issues were much discussed at the 2022 International Forum on Art and Artificial Intelligence at Ai Factory, and we thought it a good occasion to write down our own thoughts. The forum's theme was 'Artificial Imagination', with guests from the fields of art, design, literature, computer science and philosophy sharing and discussing the topic. The Dune Institute was also invited to participate as a special observer.

As the questions above suggest, though, we do not have a definitive view on this; instead, we want to share what we are thinking about in the form of questions.

After trying out the AI image betas, the members of Dune and our friends at Media Lab exclaimed in earnest that such a technological revolution may have no less impact on images and creativity than photography had on painting a hundred years ago. As Benjamin quoted Paul Valéry at the beginning of his famous essay "The Work of Art in the Age of Mechanical Reproduction":

The great technological innovations that are developing in the world will change the whole technique of artistic expression, which will inevitably affect the creation of art itself, and will eventually lead, perhaps in the most fascinating way, to a change in the very concept of art.

For Benjamin, the rise of cinema at the time made art less of a collector's item removed from the masses, because film was inherently popular. Today, AI art platforms seem to make everyone a creator. At the same time, the redefinition of the image seems set to further reshape our relationship with the world; after all, vision is the primary channel through which humans perceive the world. Just as the placement of the camera in film created a new way for the viewer to see and empathize, the artificial intelligence in AI art seems to offer us a different way of thinking about human creativity.

01 Are imagination and creativity unique to humans?

For many people, the terms 'artificial' and 'imagination' are destined to be a contradictory pair; 'artificial imagination' simply cannot exist, and there is no room for comparison or discussion. The word 'artificial' points to the 'man-made' and to 'artifacts', whereas imagination seems to be innate to man, 'natural' rather than 'manufactured'. Moreover, imagination is often considered a uniquely human ability that distinguishes us from non-human 'things', be they plants and animals, organic and inorganic matter, or man-made objects as diverse as tools and machines.

This view is particularly prized by anthropocentrism, because it is through this unique creativity that people gain subjectivity. In both the Renaissance and heroic modernism, we see many 'standalone geniuses'. These artists, architects and writers are widely known, and the aura of genius sets them apart from their collaborators in work and life; their creative powers are mysterious (or sacred, if you will). Their biographies, works, processes and techniques are studied by later generations, but their imagination and creativity are treated as a priori or transcendent. Such a capacity is like a divine gift, belonging only to them; a mysterious and infinite black box that others cannot penetrate, much less replicate. Because of this, these genius creators, as individuals, are separated from their contemporaries, as if they "existed alone".

Prompt: "A lemur in the middle of a star cluster map." Image generated by Midjourney. Image credit: Author.

However, Object-Oriented Ontology (OOO) and posthumanist studies of art, design, literary practice and philosophy all challenge this anthropocentric perspective. At the forum, guests also critiqued and reflected on different aspects of this idea. For example, Xu Yu, paraphrasing Kant, emphasized in his talk that 'imagination' itself has an 'artificial' component, since the process of image formation always involves artificial systems such as 'symbols', while Joanna Zielinska cited the posthumanist scholar Claire Colebrook to critique the idea of humans as the sole creators of art.

This question is not only central to understanding the artificial imagination; it also becomes a reflection on the human imagination. In her talk, Joanna Zielinska presented images drawn by the 'Senseless Drawing Bot' by Japanese designers So Kanno and Takahiro Yamaguchi, images that at once resemble a child's scribbles and share highly similar qualities.

For Joanna Zielinska, rather than seeing this work as a parody of human graffiti, we might understand it as a rethinking of human creative behavior: perhaps human creativity does not come from human reason and subjective agency at all. All this makes the proposition that 'imagination is natural rather than artificially made' less stable.

"Senseless Drawing Bot" designed by So Kanno and Takahiro Yamaguchi. Photo credit: Yohei Yamakami 2011.

Cy Twombly's "Bacchus" series (2005). Art critic Arthur Danto called these paintings "works of drunken revelry", the kind of intoxication that only a god could achieve. Photo credit: Rob McKeever / Gagosian Gallery.

02 Who owns the attribution and autonomy?

Today, digital literacy is almost a necessity for a new generation of humans. The mechanically and digitally reproduced image material produced by AI also provides new stimuli and raw material for human artists who have almost exhausted their creative possibilities. The artificial imagination is both autonomous and ubiquitous, and its aesthetics are dazzling.

But developers and artists clearly do not stop at viewing AI art as a pool of inspiration that can be continually expanded. We also wonder: if imagination is not unique to humans, can AI create independently? At the forum, we saw several artists and designers share work produced with AI as a co-creator, but what would a work of art made solely by AI look like?

Prompt: "American suburban home, 1960s collage advertising style." Image generated by Midjourney. Image credit: Author.

This is obviously still difficult. Artificial intelligence comes from people, and the imagination and creation of existing AI are accompanied by humans throughout the entire process, much like parental care. What makes the 'attribution' of artificial creation most problematic is, first of all, that the data the algorithms learn from and train on is still specified by humans, and the outputs are ultimately selected by humans. The result still needs to be 'processed' by humans before it can be 'digested' by the human eye.

At the forum, Ken Liu recounted that he had tried having an AI learn from his own writing in order to create new texts, only to find that the results were unremarkable and hard even to borrow from. He had to revise them significantly, adding many passages of his own, and eventually published "50 Things Every AI Working with Humans Should Know". Similarly, the aesthetic that algorithms produce through recommendation analysis tends to be blunt and homogeneous, and sometimes erratic. Even so, many designers have deliberately collected these images, editing and integrating them into new galleries that serve as moodboards for their own work.

In addition to producing images as described at the beginning of the article, AI can also further process existing images to create new work in a given style. It turns an image creator's style into a filter that can be applied to other images. For example, entering a description of an image into the AI art site Dream and selecting "Ghibli style" yields a new image in a similar fantasy animation style, while switching to a surrealist style results in an image resembling a Dalí painting.

Left: results after entering the prompt "Dune, Ghibli style"; right: results after entering the prompt "Dune, surreal style". Image credit: Author.

The user provides the proposition, and the AI, as the outputter, produces the new image. Or is it that the user provides the content, and the AI fits it into the frame of someone else's style to produce a new image? Is the AI, then, an author or a tool in this output process? Who is actually the subject of this creation: the AI, the AI developer, the user, or the artist whose style is borrowed?

It is also tempting to imagine: if no one trained the AI or filtered its output, and if we looked beyond using AI to process existing images, could it produce some kind of more 'autonomous' work? Such a work might point to a more unknowable imagination, and the results might be beyond human understanding and appreciation. Philip K. Dick's "Do Androids Dream of Electric Sheep?" and Lem's "Solaris" offer us this paradigm: the imagination of the unimaginable.

03 Is mass production by artificial intelligence creation?

By analyzing vast amounts of collected image data, the AI extracts existing artistic styles, object shapes and character traits, integrates them and outputs them, and new images are created. In the AI image beta community we are part of, new prompts and new images are constantly being created, selected, iterated and developed, giving us a strong sense that this is less a 'production' than a 'reproduction' with numerous variations and selections.

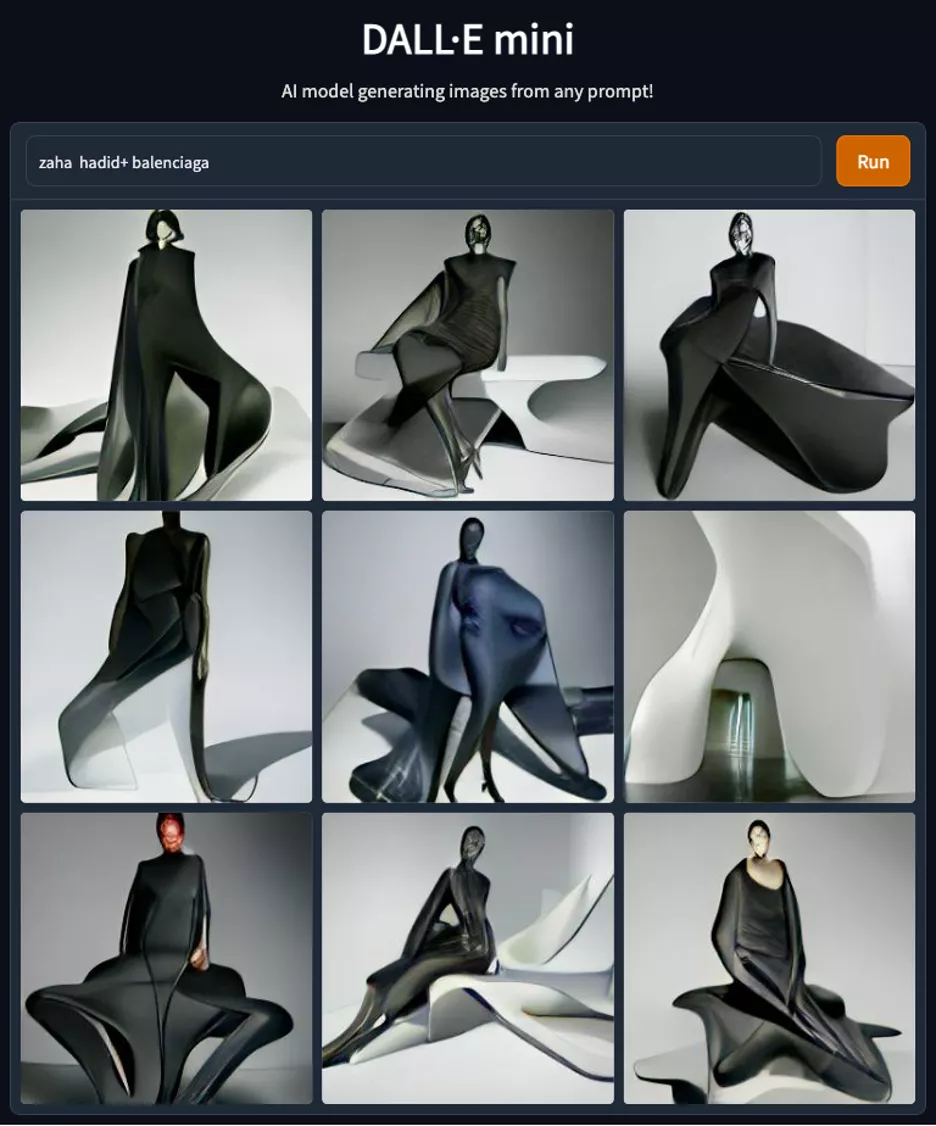

These images also simulate creations that do not exist in the real world. For example, in DALL-E, Midjourney or other AI image generators we can superimpose architects and fashion brands with strong styles, entering something like "Zaha Hadid + Balenciaga", to get a series of garments with both bold silhouettes and smooth curvature: singular images that strongly combine the genes of both. Such an 'atlas' of nine or four new images is just enough to establish a new creative language, as if a hybrid designer of this kind existed in the world. In the same way, we can hybridize words for food and tools, architecture and art, painting and photography, and so on, to create new 'artifacts'. The pictorial realities of the electronic age begin to multiply freely, apart from our physical reality. Are these new images, which multiply infinitely and autonomously, 'works' created by artificial intelligence?

Nine images produced in DALL-E mini using 'Zaha Hadid' and 'Parisienne'. Source: huggingface.

Indeed, in terms of our traditional understanding of art, this kind of creation can easily be seen as 'reproduction'. You could say that it simply builds on existing images, reinventing and collaging pieces that have already established a strong style.

If we believe that the starting point for creation is imagination, the original human heart and instinct to create art, then when AI recycles some existing artwork, is this reproduction also considered new imagination? Is this just a mapping of our imagination? And on the flip side of that question, if we think what AI does is not new, how can we argue that human imaginary objects are new, and not a recombination of multiple pre-existing elements?

04 Is Artificial Intelligence better at image processing than word processing?

AI creation may be like a mirror of human creation, with its dangerously appealing creative life.

There is often another important thing on this mirror: the filter. In fact, 'filter' means both a lens filter and a sieve, and both senses are especially critical to AI art. In photography, we are familiar with the use of filters. When shooting, photographers can mount polarizers of different shades and reflectivity on the front of the lens to make sure the light is as expected; in post-production, they can use processing software such as Lightroom to give the original image a different style, such as "cyberpunk" with an emphasis on purple and yellow, or "vintage" with lower saturation and a yellowish cast.

A filter that uses AI technology to turn images into a nighttime effect. Image credit: Cyanapse's Photorealistic Image Filters.

For the film Delete My Photos, director Dmitry Nikiforov used the image editor Prisma.

By adding a "filter", an image takes on a strong atmosphere or emotion, and the filter is often the key element that lets a photographer establish a personal style the public quickly recognizes. It can be said that filters have changed the way photographers create their work. However, in traditional filter-based (re)creation, the atmosphere and emotion that come from style rarely exist independently of the content of the work; the filter is more like an add-on, icing on the cake that sits outside the subject.

This leads us to think about the relationship between image filters and text style. On the one hand, artistic filters are already very common in the pre- and post-processing of images, and AI has reached considerable sophistication in filtering existing images, adjusting not just light and dark, white balance and color, but also abstract lines, and reorganizing the forms and brush strokes of people and objects. On the other hand, it still seems difficult to apply filters to text.

Ken Liu also mentioned in his talk that it seems difficult to use artificial intelligence to generate text and add some kind of 'style filter' to it. Perhaps the existing Internet ecology has become so dominated by images that text processing is no longer the area most favored by capital; but we are equally curious about the intrinsic differences in stylization between images and text. Just as there are terms like 'Ghibli-esque', 'cyberpunk-esque' and 'retro-esque' for images, different writers' texts and narratives clearly have strong aesthetic styles of their own. We might say 'Shakespearean', 'Kafkaesque' or 'Orwellian', for example, but AI processing that adds style to a piece of text is still very rare compared to the bustling market for image filters.

We might make some conjectures. For AI development, in contrast to the clear overlay relationship between image and filter in image processing, the style of a text seems to be not just an add-on to the text but dissolved in the text itself, so that it cannot simply be stripped away. Kafka's style does not come entirely from his preference for particular word pairings or dialect expressions; rather, one could say that the world he creates, and the general situation of the characters on which his narrative is built, constitute his unique style. Similarly, Orwell's style lies not in the particularity of his diction, but in his understanding and portrayal of a certain totalitarian system. If an AI were to learn from a large body of such texts and extract a 'Kafkaesque' or 'Orwellian' filter that could easily be applied to any text the user supplies, the difficulty would perhaps be how to keep such processing from falling flat by stopping at superficial imitation of wording.

Still from the film "1984". Photo credit: Nineteen Eighty-Four.

But literature is not virgin territory for AI creation either. Among text forms, poetry is already a relatively successful application of AI and algorithms to creative writing. Fiction and non-fiction both require the author to stitch a plot or a line of reflection into a readable, coherent whole, but poetry seems to spare AI this step. By dismantling and reorganizing words and phrases, many AI-created poems combine imagery that is rarely juxtaposed, and the leaps between images are left to the imagination of the human reader. This, in turn, can provide a different kind of inspiration for human authors.

However, poetry may simply be the form of writing closest to the way images are created. We remain curious about how AI will move forward in the field of literature: can it reshape the way we tell stories with words in the same way that it reshapes the way we see the world with images? Through deep learning, will AI be able to improve its sense of the chained sequence between elements, so as to have a 'Shakespearean', 'Kafkaesque' or 'Orwellian' filter at the level of storytelling? If AI is better with images after all, will the narratives of films, cartoons, comics or graphic novels be the first that AI masters?

Another question to ponder about the relationship between words and images is how creation begins. Several of the major AI image generation models we have presented still start from a piece of human input in the form of a textual prompt, which the AI then converts into an image. Is this the optimal, or the most human, way to go about it?

We know that human image creation often begins with a simple form, a vague feeling or a fragment of a remembered act, which cannot even be put into a clear written description; yet it is from this wonderful haze that painters, directors and others begin creating images. The work of Cy Twombly, mentioned earlier, often conveys a sense of subconscious scribbling, and the beginnings of his creations approach a natural act that precedes language or even a complete image. Current models of AI image generation still take words as their starting point, which would also seem to establish a new mainstream way of making art in the future. But is this only one understanding, and a very engineer-like one at that, and will we lose some other kind of imagination as a result? Of course, there is AI software that generates images from sketches; still, we are more curious about the new work that could come from more diverse ways of creating with text and image.